Why comparisons must address genuine uncertainties

Too much research is done when there are no genuine uncertainties about treatment effects. This is unethical, unscientific, and wasteful.

Key Concepts addressed:

- 2-8 Consider all of the relevant fair comparisons

- 2-9 Reviews of fair comparisons should be systematic

- 2-12 Single studies can be misleading

Details

The design of treatment research often reflects commercial and academic interests; ignores relevant existing evidence; uses comparison treatments known in advance to be inferior; and ignores needs of users of research results (patients, health professionals and others).

A good deal of research is done even when there are no genuine uncertainties. Researchers who fail to conduct systematic reviews of past tests of treatments before embarking on further studies sometimes don’t recognise (or choose to ignore the fact) that uncertainties about treatment effects have already been convincingly addressed. This means that people participating in research are sometimes denied treatment that could help them, or given treatment likely to harm them.

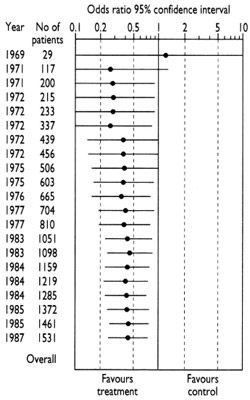

The diagram that accompanies this and the following paragraph shows the accumulation of evidence from fair tests done to assess whether antibiotics (compared with inactive placebos) reduce the risk of post-operative death in people having bowel surgery (Lau et al. 1995). The first fair test was reported in 1969. The results of this small study left uncertainty about whether antibiotics were useful – the horizontal line representing the results spans the vertical line that separates favourable from unfavourable effects of antibiotics. Quite properly, this uncertainty was addressed in further tests in the early 1970s.

As the evidence accumulated, however, it became clear by the mid-1970s that antibiotics reduce the risk of death after surgery (the horizontal line falls clearly on the side of the vertical line favouring treatment). Yet researchers continued to do studies through to the late 1980s. Half the patients in these later studies received placebos and were thus denied a form of care which had been shown to reduce their risk of dying after their operations. How could this have happened? It was probably because researchers continued to embark on research without reviewing existing evidence systematically. This behaviour remains all too common in the research community, partly because some of the incentives in the world of research – commercial and academic – do not put the interests of patients first (Chalmers 2000).
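The way such an accumulating picture is built can be illustrated with a minimal sketch of a cumulative meta-analysis. It assumes a simple fixed-effect, inverse-variance pooling of log odds ratios with a 0.5 continuity correction, which is not necessarily the method used by Lau et al. (1995). The trial counts are invented purely for illustration and are not the antibiotic data; the names `TRIALS`, `log_odds_ratio` and `cumulative_meta_analysis` are likewise hypothetical.

```python
import math

# HYPOTHETICAL trials, invented for illustration (not the data in Lau et al. 1995):
# (year, deaths_treated, n_treated, deaths_control, n_control)
TRIALS = [
    (1969, 3, 40, 5, 40),
    (1971, 4, 60, 9, 60),
    (1973, 5, 80, 12, 80),
    (1975, 6, 100, 15, 100),
]


def log_odds_ratio(deaths_t, n_t, deaths_c, n_c):
    """Return (log odds ratio, variance), adding 0.5 to every cell."""
    a = deaths_t + 0.5          # treated, died
    b = n_t - deaths_t + 0.5    # treated, survived
    c = deaths_c + 0.5          # control, died
    d = n_c - deaths_c + 0.5    # control, survived
    return math.log((a * d) / (b * c)), 1 / a + 1 / b + 1 / c + 1 / d


def cumulative_meta_analysis(trials):
    """Pool trials in date order; after each one, report the running
    odds ratio and its 95% confidence interval (fixed-effect model)."""
    rows = []
    sum_w = 0.0    # running sum of inverse-variance weights
    sum_wy = 0.0   # running sum of weight * log odds ratio
    for year, dt, nt, dc, nc in sorted(trials):
        y, var = log_odds_ratio(dt, nt, dc, nc)
        w = 1 / var
        sum_w += w
        sum_wy += w * y
        pooled = sum_wy / sum_w
        se = math.sqrt(1 / sum_w)
        rows.append((year,
                     math.exp(pooled),
                     math.exp(pooled - 1.96 * se),
                     math.exp(pooled + 1.96 * se)))
    return rows


if __name__ == "__main__":
    for year, odds_ratio, low, high in cumulative_meta_analysis(TRIALS):
        # An interval containing 1 corresponds to a horizontal line
        # that spans the vertical line of no effect.
        verdict = "uncertain" if low < 1 < high else "excludes no effect"
        print(f"{year}: OR {odds_ratio:.2f} (95% CI {low:.2f} to {high:.2f}) {verdict}")
```

Each printed row corresponds to one horizontal line in a cumulative diagram. Once the interval no longer includes 1, further placebo-controlled trials of the same question no longer address a genuine uncertainty.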

Patients and participants in research can also suffer because researchers have not systematically reviewed relevant evidence from animal research before beginning to test treatments in humans. A Dutch team reviewed the experience of over 7000 patients who had participated in tests of a new calcium-blocking drug given to people experiencing a stroke. They found no evidence to support its increasing use in practice (Horn and Limburg 2001). This made them wonder about the quality and findings of the animal research that had led to the research on patients. Their review of the animal studies revealed that these had never suggested that the drug would be useful in humans (Horn et al. 2001).

The most common reason that research does not address genuine uncertainties is that researchers simply have not been sufficiently disciplined to review relevant existing evidence systematically before embarking on new studies. Sometimes there are more sinister reasons, however. Researchers may be aware of existing evidence, but they want to design studies to ensure that their own research will yield favourable results for particular treatments. Usually, but not always, this is for commercial reasons (Djulbegovic et al. 2000; Sackett and Oxman 2003; Chalmers and Glasziou 2009; Macleod et al. 2014). These studies are deliberately designed to be unfair tests of treatments. This can be done by withholding a comparison treatment known to help patients (as in the example given above), or by giving comparison treatments in inappropriately low doses (so that they don’t work so well) or in inappropriately high doses (so that they have more unwanted side effects) (Mann and Djulbegovic 2012). It can also be done by following up patients for too short a time (and so missing delayed effects of treatments), and by using outcome measures (‘surrogates’) that have little or no correlation with the outcomes that matter to patients.

It may be surprising to readers of this essay that the research ethics committees established during recent decades to ensure that research is ethical have done so little to influence this research malpractice. Most such committees have let down the people they should have been protecting because they have not required researchers and sponsors seeking approval for new tests to have reviewed existing evidence systematically (Savulescu et al. 1996; Chalmers 2002). The failure of research ethics committees to protect patients and the public efficiently in this way emphasises the importance of improving general knowledge about the characteristics of fair tests of medical treatments.

The text in these essays may be copied and used for non-commercial purposes on condition that explicit acknowledgement is made to The James Lind Library (www.jameslindlibrary.org).

References

Chalmers I (2000). Current Controlled Trials: an opportunity to help improve the quality of clinical research. Current Controlled Trials in Cardiovascular Medicine 1:3-8. Available: http://www.ncbi.nlm.nih.gov/pmc/articles/PMC59587/pdf/cvm-1-1-003.pdf

Chalmers I (2002). Lessons for research ethics committees. Lancet 359:174.

Chalmers I, Glasziou P (2009). Avoidable waste in the production and reporting of research evidence. Lancet 374:86-89. doi:10.1016/S0140-6736(09)60329-9.

Djulbegovic B, Lacevic M, Cantor A, Fields KK, Bennett CL, Adams JR, Kuderer NM, Lyman GH (2000). The uncertainty principle and industry-sponsored research. Lancet 356:635-638.

Horn J, Limburg M (2001). Calcium antagonists for acute ischemic stroke (Cochrane Review). In: The Cochrane Library, Issue 3, Oxford: Update Software.

Horn J, de Haan RJ, Vermeulen M, Luiten PGM, Limburg M (2001). Nimodipine in animal model experiments of focal cerebral ischaemia: a systematic review. Stroke 32:2433-2438.

Lau J, Schmid CH, Chalmers TC (1995). Cumulative meta-analysis of clinical trials builds evidence for exemplary clinical practice. Journal of Clinical Epidemiology 48:45-57.

Macleod MR, Michie S, Roberts I, Dirnagl U, Chalmers I, Ioannidis JPA, Salman RA-S, Chan A-W, Glasziou P (2014). Biomedical research: increasing value, reducing waste. Lancet 383:4-6.

Mann H, Djulbegovic B (2012). Comparator bias: why comparisons must address genuine uncertainties. JLL Bulletin: Commentaries on the history of treatment evaluation (www.jameslindlibrary.org).

Sackett DL, Oxman AD (2003). HARLOT plc: an amalgamation of the world’s two oldest professions. BMJ 327:1442-1445.

Savulescu J, Chalmers I, Blunt J (1996). Are research ethics committees behaving unethically? Some suggestions for improving performance and accountability. BMJ 313:1390-1393.

Read more about the evolution of fair comparisons in the James Lind Library.